No, it's not a new horror film. It's Norman: also known as the first psychopathic artificial intelligence, just unveiled by US researchers.

The goal is to explain in layman's terms how algorithms are made, and to make people aware of AI's potential dangers.

Norman "represents a case study on the dangers of Artificial Intelligence gone wrong when biased data is used in machine learning algorithms," according to the prestigious Massachusetts Institute of Technology (MIT).

Pınar Yanardağ, Manuel Cebrian and Iyad Rahwan, part of an MIT team, added: "There is a central idea in machine learning: the data you use to teach a machine learning algorithm can significantly influence its behavior."

"So when we talk about AI algorithms being biased or unfair, the culprit is often not the algorithm itself, but the biased data that was fed to it," they said via email.

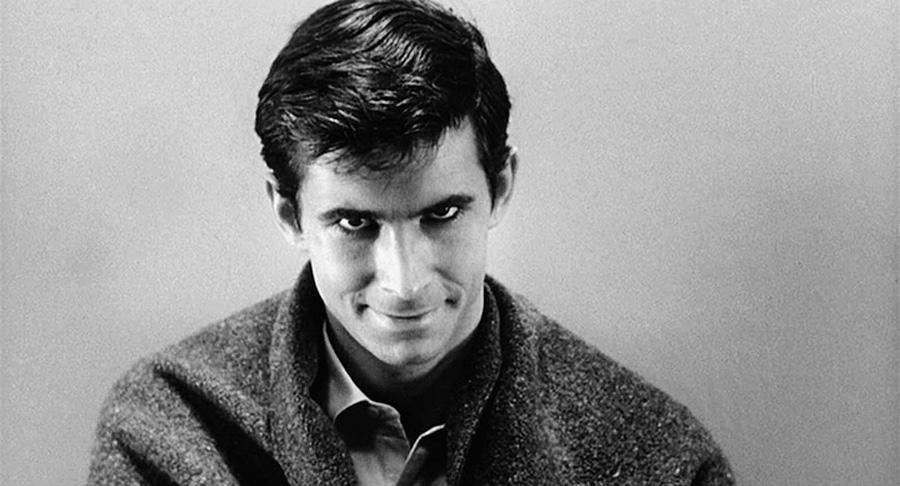

Hence the idea of creating Norman, which was named after the psychopathic killer Norman Bates in the 1960 Alfred Hitchcock film "Psycho."

Norman was "fed" only short captions describing images of "people dying" found on the Reddit internet platform. The researchers then submitted images of ink blots, as in the Rorschach psychological test, to determine what Norman was seeing and compare his answers to those of a traditionally trained AI.

The results are scary, to say the least: where a traditional AI sees "two people standing close to each other," Norman sees in the same ink blot "a man who jumps out a window."

And where Norman sees "a man shot to death by his screaming wife," the other AI detects "a person holding an umbrella."
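The principle the researchers describe, that the same algorithm behaves differently depending on the data it is fed, can be illustrated with a toy sketch. This is not the MIT team's model: the word-pair counter below, the function names `train` and `describe`, and the two tiny caption sets are all invented for illustration.

```python
from collections import Counter


def train(captions):
    """Count which word follows each word in the training captions."""
    model = {}
    for caption in captions:
        words = caption.lower().split()
        for current, following in zip(words, words[1:]):
            model.setdefault(current, Counter())[following] += 1
    return model


def describe(model, start, max_words=6):
    """Extend `start` by repeatedly picking the most frequent next word."""
    words = [start]
    for _ in range(max_words):
        followers = model.get(words[-1])
        if not followers:
            break  # no known continuation for this word
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)


# Invented toy captions; NOT the data used for Norman.
neutral_captions = [
    "a person holding an umbrella",
    "a person holding an umbrella",
    "a person walking outside",
]
dark_captions = [
    "a person falling from the window",
    "a person falling from the window",
    "a person lying still",
]

# Identical code, different training data.
print(describe(train(neutral_captions), "a"))
print(describe(train(dark_captions), "a"))
```

Both "models" run exactly the same code and start from the same prompt; only the training captions differ, and so do the descriptions they produce.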

A dedicated website, norman-ai.mit.edu, shows 10 examples of ink blots accompanied by responses from both systems, always with a macabre response from Norman.

The site also lets internet users test Norman with ink blots and submit their own answers "to help Norman repair itself."